On March 5, 2026, Anthropic published what may be the most methodologically serious study yet on AI's effects on the labor market. Written by Massenkoff and McCrory, it introduces a new measure called "Observed Exposure": a composite of what large language models are theoretically capable of doing and what they are actually being used to do, drawn from real Anthropic usage data. The result is a more grounded picture than most existing research, and a more unsettling one, if you read it slowly enough.

Key Points

- Anthropic's March 2026 study introduces "Observed Exposure," a metric combining theoretical LLM capability with real usage data, giving a more grounded view of AI's labor market impact.

- Computer programmers have the highest observed AI task coverage at 74.5%, meaning nearly three-quarters of their measurable work tasks already show up in real AI usage.

- No systematic unemployment spike has appeared for AI-exposed workers since late 2022, but the study's authors caution that unemployment is a lagging indicator.

- Workers aged 22 to 25 in AI-exposed occupations have seen their job-finding rate fall by approximately 14% since ChatGPT's release.

- For every 10 percentage point increase in AI task coverage, BLS employment growth projections for that occupation fall by 0.6 percentage points through 2034.

The headline finding is that unemployment has not risen systematically for workers in AI-exposed occupations since late 2022. That is the finding most commentators will cite. It is accurate. It is also the wrong place to look.

A Better Measure, and What It Shows

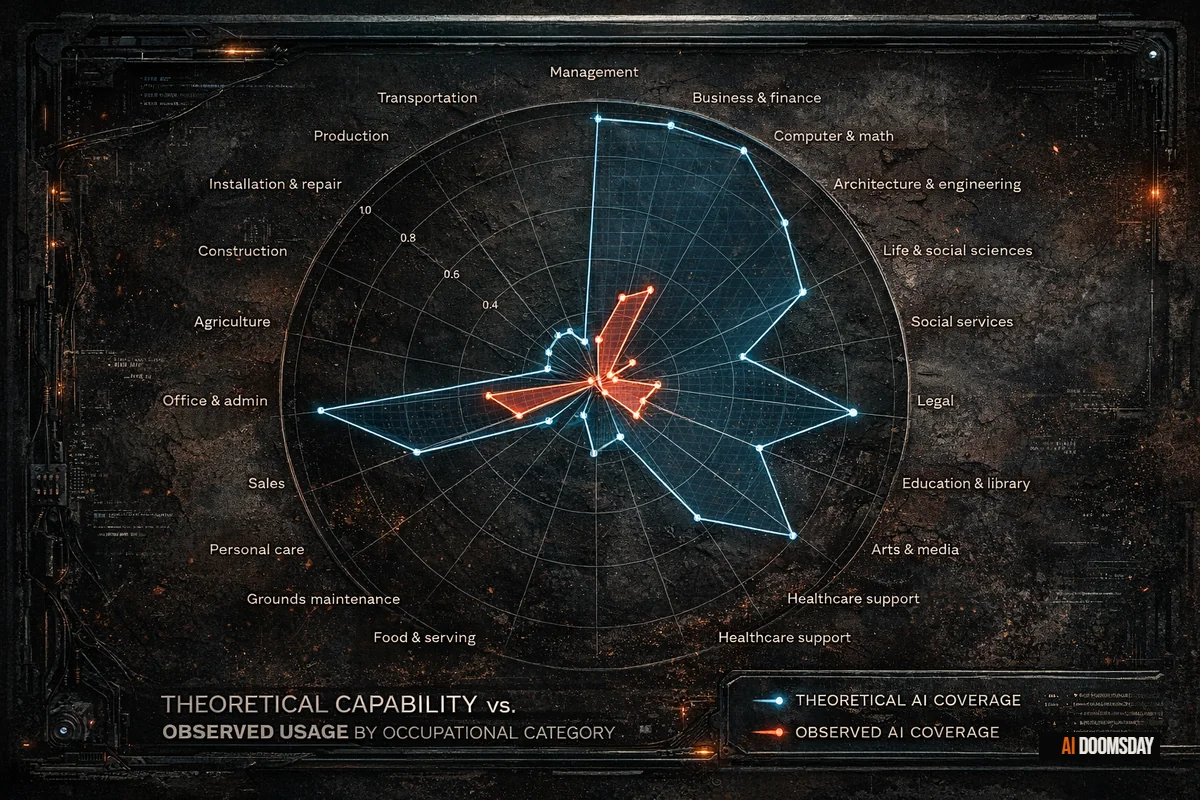

The core contribution of the study is the Observed Exposure metric itself. Prior research, including the influential Eloundou et al. beta measure from 2023, calculated theoretical exposure: which occupational tasks are within LLM capability in principle. Massenkoff and McCrory layer real usage on top of that, weighting automated uses and work-related deployments more heavily than augmentative ones. The result is a metric that measures what AI is actually doing to occupations right now, not what it could do under ideal conditions. How large language models actually work helps explain why the gap between theoretical and observed exposure exists: capability is not uniform across task types, and the tasks LLMs handle most fluently are precisely those at the productive core of knowledge work.
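To make the distinction concrete, here is a minimal Python sketch of how a usage-weighted exposure score can diverge from a purely theoretical one. It is not the authors' formula. The `Task` fields, the boolean feasibility flag standing in for a capability rating such as the beta measure, and the 1.0 and 0.5 weights for automated versus augmentative use are all hypothetical assumptions chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical weights: automated use counts fully, augmentative use counts half.
# These values are illustrative assumptions, not the study's parameters.
AUTOMATED_WEIGHT = 1.0
AUGMENTATIVE_WEIGHT = 0.5


@dataclass
class Task:
    name: str
    time_share: float               # fraction of the occupation's work time (shares sum to 1)
    theoretically_feasible: bool    # stand-in for a capability rating such as a beta score
    automated_use: bool = False     # observed in usage data as automation
    augmentative_use: bool = False  # observed in usage data as augmentation


def theoretical_exposure(tasks: list[Task]) -> float:
    """Share of work time in tasks an LLM could perform in principle."""
    return sum(t.time_share for t in tasks if t.theoretically_feasible)


def observed_exposure(tasks: list[Task]) -> float:
    """Share of work time in feasible tasks that actually appear in usage,
    with automated use weighted more heavily than augmentative use."""
    score = 0.0
    for t in tasks:
        if not t.theoretically_feasible:
            continue  # usage does not count toward exposure where capability is absent
        if t.automated_use:
            score += AUTOMATED_WEIGHT * t.time_share
        elif t.augmentative_use:
            score += AUGMENTATIVE_WEIGHT * t.time_share
    return score


if __name__ == "__main__":
    # Hypothetical occupation with four tasks.
    tasks = [
        Task("draft routine reports", 0.4, True, automated_use=True),
        Task("review colleagues' work", 0.3, True, augmentative_use=True),
        Task("negotiate with vendors", 0.2, True),  # feasible but not yet seen in usage
        Task("on-site inspections", 0.1, False),
    ]
    print(f"theoretical: {theoretical_exposure(tasks):.2f}")  # theoretical: 0.90
    print(f"observed:    {observed_exposure(tasks):.2f}")     # observed:    0.55
```

Under these assumptions the same occupation scores 0.90 on theoretical exposure but only 0.55 on observed exposure. Because usage only counts on feasible tasks and no weight exceeds 1.0, the observed score in this sketch can never exceed the theoretical one.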

The gap between the two is enormous. Office and administrative support occupations score roughly 90% on theoretical exposure. Their observed score is far lower. Computer and mathematical occupations score 94% theoretically. Claude, the study reports, currently covers only 33% of their tasks in practice.

THE GAP

Computer and mathematical occupations: 94% theoretically exposed to AI disruption. Observed coverage today: 33%. That gap is not a safety margin. It is a measure of how much runway remains before theory becomes operational. The direction of travel is set. The rate of closure is the only variable.

The most exposed occupations by observed score are: computer programmers at 74.5%, customer service representatives at 70.1%, data entry keyers at 67.1%, medical record specialists at 66.7%, and market research analysts at 64.8%. These are not entry-level, peripheral roles. They sit at the productive core of the white-collar economy.

The Workers in the Crosshairs Are Not Who Most People Expect

One of the study's most striking findings is the demographic profile of highly exposed workers. Compared to those with little or no AI exposure, they are more likely to be female by 16 percentage points, more likely to be white or Asian, far more educated (17.4% hold graduate degrees, versus 4.5% of the unexposed population), and they earn 47% more on average.

The workers most at risk from AI are the well-paid, credentialed, office-based professionals who spent years building expertise in exactly the kinds of structured, knowledge-intensive tasks that large language models handle most fluently. The disruption is not arriving at the bottom of the income distribution first. It is arriving in the middle and upper-middle, in the occupations that formed the primary pathway to economic stability for the college-educated workforce of the last three decades.

"AI is far from reaching its theoretical capabilities in the labor market."

Massenkoff & McCrory, Anthropic, March 2026

The authors frame that gap as a reason for measured assessment rather than panic. They are right to be careful. But the sentence cuts both ways. If theory describes where the ceiling is, and observed usage describes where we are now, then what the study is measuring is the distance between the present and a substantially worse version of these numbers. The gap is not reassurance. It is a forecast.

From the Inside: A Programmer's Reading

I have been writing code professionally for over a decade. The 74.5% observed exposure figure for computer programmers feels directionally accurate to me, and the pace of change in the last twelve months makes it feel like a conservative floor.

The most visible shift is not in what AI can do in isolation. It is in what it has done to the professional hierarchy. The gap between a senior programmer and a junior one has narrowed to a degree that would have seemed implausible in 2022. In most day-to-day work, the difference is no longer obvious. Code review, implementation, debugging, documentation: AI handles a meaningful portion of all of it, and it handles it well enough that the delta between five years of experience and two years of experience has compressed sharply. The gap that remains is at the architectural level, in system design decisions, in judgment calls about what not to build, in understanding how a piece of code will behave under conditions the prompt never described. That is a narrower ledge than it used to be.

Something else the study cannot fully capture is what companies do with the productivity gains. AI provides genuine daily support. It compresses timelines, reduces friction, and handles repetitive scaffolding that used to take hours. But in my experience, companies have responded by raising expectations proportionally, or beyond. What used to require months and multiple people now takes weeks. The efficiency gain does not flow to the worker in the form of a lighter workload or more time for interesting problems. It flows to the delivery timeline. The bar rises to absorb whatever AI adds. Amazon's explicit use of AI to justify 16,000 layoffs is the clearest corporate articulation of this logic: the gains go to the balance sheet, not to the workforce that helped generate them.

And the models are improving. A substantial share of what was only theoretical in 2023 is already operational in 2026. Eloundou et al.'s beta measure, which forms the theoretical backbone of the Massenkoff and McCrory study, was calibrated on the capabilities of early 2023 models. The study's authors acknowledge this, noting that the measure likely understates current AI capability. The observed-to-theoretical gap is closing at a pace that academic measurement frameworks, which run on publication cycles, are structurally unable to track in real time.

What the Employment Data Actually Says

The study's most-cited finding is also its most carefully hedged. Massenkoff and McCrory find no systematic increase in unemployment for highly exposed workers since the launch of ChatGPT in late 2022. They are precise about what this does and does not mean.

Unemployment is a lagging indicator. Workers who lose jobs in AI-exposed occupations may find employment in adjacent fields, may reduce their labor supply, or may leave the measured workforce entirely without registering as unemployed. The absence of a headline unemployment spike does not mean nothing is changing. It means the change is not yet large enough, or fast enough, or visible enough to surface in the aggregate statistics that economists typically watch.

The signal the study does find, buried toward the end, is more specific and more consequential. Among workers aged 22 to 25, the job-finding rate in highly exposed occupations has dropped by approximately 14% since ChatGPT's release. The result is described as "barely statistically significant," and the authors are appropriately cautious about over-interpreting it. But the direction is consistent with a labor market where firms in AI-exposed sectors are hiring fewer entry-level workers because AI is absorbing the tasks those workers would have been hired to perform. A Senate floor speech on AI and 100 million jobs cited the Stanford "canaries in the coal mine" study showing a 16% employment decline for young workers in AI-exposed fields, a finding that runs parallel to this one and predates it by several months.

THE SIGNAL WORTH WATCHING

For every 10 percentage point increase in AI task coverage, BLS employment growth projections for that occupation fall by 0.6 percentage points through 2034. The hiring door for young workers in exposed occupations is already measurably narrower. Unemployment figures will be the last place this shows up clearly.

The Door Is Still Open. Fewer People Are Getting Through.

The study's BLS analysis reinforces this reading at the occupational level. For every 10 percentage point increase in AI coverage, projected job growth through 2034 falls by 0.6 percentage points. Across the most exposed occupations, that adds up to a material reduction in long-term hiring expectations. The jobs are not being eliminated wholesale. The headcount required to fill them is shrinking.
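The BLS relationship is simple enough to work through directly. The sketch below reads it as a constant linear slope of -0.06 percentage points of projected growth per percentage point of observed coverage. That linearity across the whole coverage range is an assumption for illustration, not something the study is quoted as stating here, and the zero-coverage comparison occupation is hypothetical.

```python
# Assumption: the reported BLS relationship (-0.6 pp per 10 pp of coverage)
# is read as a constant linear slope across the full coverage range.
SLOPE = -0.6 / 10  # pp of projected growth through 2034 per pp of observed coverage


def growth_gap_pp(coverage_high: float, coverage_low: float) -> float:
    """Implied difference in projected growth through 2034, in percentage points,
    between occupations at two observed-coverage levels (both given in percent)."""
    return SLOPE * (coverage_high - coverage_low)


if __name__ == "__main__":
    # The article's benchmark: a 10-point coverage difference.
    print(round(growth_gap_pp(10.0, 0.0), 2))  # -0.6
    # Computer programmers (74.5%) versus a hypothetical occupation with 0% coverage.
    print(round(growth_gap_pp(74.5, 0.0), 2))  # -4.47
```

Under that linear reading, an occupation at the programmers' 74.5% coverage carries projected growth roughly 4.5 percentage points below a comparable zero-coverage occupation, which is how a small-sounding 0.6-point slope becomes material at the top of the exposure ranking.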

This is a structural shift that plays out differently depending on where you are in your career. For workers already established in these fields, the pressure is real but gradual: rising output expectations, narrowing salary differentials, fewer promotion pathways as management layers thin. For the workers trying to enter those fields now, the aperture is smaller, the competition is harder, and AI is functioning not as a colleague but as a substitute for the entry-level work that used to pay for the learning curve.

The programmers who entered the workforce between 2018 and 2022 were hired to write code that AI now assists with substantially. The programmers trying to enter today are competing against that assistance. Defined by its historical function, the junior role makes less economic sense to hire for when AI handles most of that function. The problem is not that those people lack ability. It is that the pipeline through which they were supposed to develop it is contracting. In the game industry, 87% of educators already report that student placement has been negatively affected: a profession watching its entry pipeline close in real time, for the same structural reason.

What used to require months and multiple people now takes weeks. The efficiency gain does not flow to the worker. It flows to the deadline.

Alberto Russo

Why This Study Matters, and Where It Falls Short

Massenkoff and McCrory are careful researchers. The methodology is sound, the findings are hedged appropriately, and the framing resists the temptation to claim more certainty than the data supports. The study is worth reading in full, and it is worth citing when the argument that AI has "no measurable labor market impact" gets deployed to stall policy discussion.

Its limitation is the same limitation all measurement frameworks share: they describe what has already happened in terms precise enough to be measured. The Eloundou beta was calibrated in 2023. The Anthropic usage data covers behavior up to the publication date. The BLS projections run through 2034 on assumptions built before today's models existed. The study's authors know this, and they explicitly frame Observed Exposure as an ongoing measurement framework designed to be updated. The current version is a baseline, not a verdict.

What the data establishes firmly is this: AI's displacement pressure is real, it is already measurable in hiring patterns for young workers, it is falling disproportionately on educated, well-paid workers in knowledge occupations, and the gap between current observed coverage and theoretical ceiling is closing. The "no unemployment impact yet" finding is not a clearance. It is a reading taken early, before the full weight of the shift has had time to reach the statistics that measure it. The study tells us where we are. The trajectory it describes tells us where this is going.