In March 2026, Senator Bernie Sanders, ranking member of the Senate Health, Education, Labor, and Pensions Committee, delivered a floor speech that received almost no prime-time coverage, no trending hashtag, and no formal response from the administration. In it he laid out a documented case that artificial intelligence is on a trajectory to eliminate close to 100 million American jobs within the next decade. The speech was sourced, structured, and largely ignored.

We think it deserves a serious reading, which means a critical one. Sanders is right about the structural risks. He is less careful in places about the distinction between projection and certainty, and occasionally leans on rhetoric that overstates what AI systems actually are. Acknowledging that does not diminish the substance of the warning. It sharpens it.

Key Points

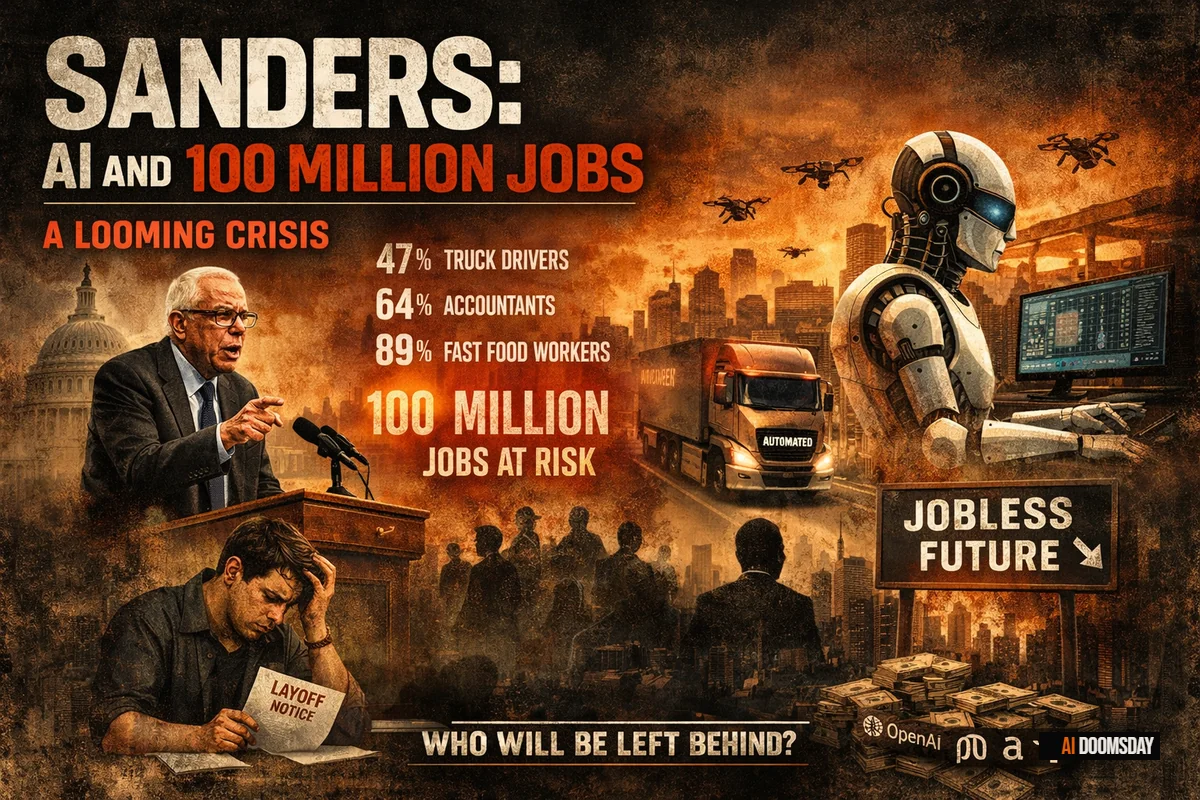

- Senator Bernie Sanders warned from the Senate floor that AI could eliminate close to 100 million American jobs within a decade. The speech received almost no prime-time coverage.

- The Senate HELP Committee projections Sanders cited include 47% of truck drivers, 64% of accountants, and 74% of those in financial operations roles at risk.

- The underlying data in the speech is largely verifiable. Sanders is less precise in places about the distinction between projection and near-certainty.

- No formal administration response was issued. No trending hashtag followed.

The Number: 100 Million Jobs

Sanders cited a Senate HELP Committee projection: close to 100 million US jobs could be eliminated within the next decade. He presented the sectoral breakdown with precision. Forty-seven percent of truck drivers. Sixty-four percent of accountants. Eighty-nine percent of fast food workers. Applied to actual headcounts, that is more than 1.6 million truck drivers, over 900,000 accountants, and roughly 3.5 million fast food workers facing displacement.
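The headcount arithmetic can be checked with a few lines of Python. This is a minimal sketch, not the committee's method: it takes only the shares and displaced-worker counts quoted above and backs out the workforce size each pair implies. The names `QUOTED`, `implied_workforce`, and `displaced_workers` are illustrative, and because the quoted counts are rounded ("more than", "over", "roughly"), the results are approximate.

```python
# Shares and displaced-worker counts as quoted in the paragraph above.
# The counts are the article's rounded figures, so every derived
# number here is approximate.
QUOTED = {
    "truck drivers": (0.47, 1_600_000),
    "accountants": (0.64, 900_000),
    "fast food workers": (0.89, 3_500_000),
}


def implied_workforce(share_at_risk: float, displaced: float) -> float:
    """Workforce size implied by a share at risk and a displaced headcount."""
    if not 0 < share_at_risk <= 1:
        raise ValueError("share_at_risk must be in (0, 1]")
    return displaced / share_at_risk


def displaced_workers(share_at_risk: float, workforce: float) -> float:
    """Forward direction: headcount at risk for a given workforce size."""
    return share_at_risk * workforce


if __name__ == "__main__":
    for job, (share, displaced) in QUOTED.items():
        base = implied_workforce(share, displaced)
        print(f"{job}: {share:.0%} of about {base / 1e6:.1f} million workers")
```

For truck drivers the implied workforce comes out at about 3.4 million, and running the calculation forward, 47% of 3.5 million is a little over 1.6 million. The quoted share and headcount are at least consistent with each other.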

Our read: the 100 million figure is a projection, not a forecast, and there is a meaningful difference. It represents the upper range of what labor market modelling produces if current capability trajectories continue uninterrupted and economic conditions do not generate compensating job creation. Projections at this scale carry wide confidence intervals. They are useful for forcing political attention (that is a legitimate function), but they should be read as scenario analysis, not as an assured outcome. The honest version of the claim is: under plausible conditions, the displacement could reach this scale. That is alarming enough without claiming certainty the data does not support.

What the sectoral breakdowns do establish, with more confidence, is the shape of the risk. The jobs most exposed share a profile: structured tasks, repeatable decision trees, physical or cognitive workflows that can be described precisely enough to be automated. Truck driving alone employs roughly 3.5 million Americans, and autonomous logistics is not a distant scenario; it is an active commercial deployment in several states. The direction of travel is not speculative. The exact velocity is.

The Acceleration: Where Sanders Is on Solid Ground

Sanders drew on Demis Hassabis, co-founder and CEO of Google DeepMind, who has stated that the AI revolution will be ten times larger in scope and ten times faster in pace than the Industrial Revolution: ten times the impact, arriving at roughly one hundred times the rate. He also cited METR, the Model Evaluation and Threat Research group, whose benchmarking shows the length of autonomous tasks that AI systems can complete without human intervention doubling approximately every seven months.

This is where the speech is strongest, and where we agree without significant reservation. The METR data is peer-reviewed and methodologically sound. Hassabis is not making a marketing claim. He leads one of the most capable AI research organisations in the world, and he is describing what his own systems are doing. The large language models now transforming knowledge work, the systems at the core of this transition, are not approaching a plateau. The capability curve is still bending upward.

The implication Sanders draws is correct: the 100 million job projection is based on what AI can do today, not what it will be able to do by the time any meaningful policy response has been designed, debated, and implemented. Whatever the final number turns out to be, the policy lag makes it worse.
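To make the policy-lag point concrete, the seven-month doubling time cited from METR can be turned into a growth factor. This is a back-of-the-envelope sketch that assumes the doubling rate stays constant, which is an extrapolation rather than anything the METR data guarantees. The function name `horizon_growth_factor` and the example lag lengths are illustrative assumptions.

```python
def horizon_growth_factor(elapsed_months: float, doubling_months: float = 7.0) -> float:
    """Multiplier on the autonomous task horizon after `elapsed_months`,
    assuming a constant doubling time (an extrapolation, not a guarantee)."""
    if doubling_months <= 0:
        raise ValueError("doubling_months must be positive")
    return 2.0 ** (elapsed_months / doubling_months)


if __name__ == "__main__":
    # Hypothetical legislative lags, in months.
    for lag in (7, 12, 24):
        factor = horizon_growth_factor(lag)
        print(f"after {lag} months: horizon multiplied by about {factor:.1f}")
```

Under that assumption the task horizon grows by about 3.3 times over 12 months and about 10.8 times over 24 months, so a framework that takes two years to pass would apply to systems roughly an order of magnitude more capable, on this one measure, than those it was drafted around.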

"We are not in a race with China or anyone else to see who is the first to eliminate millions of jobs or the first to build an AI that destroys the planet."

Sen. Bernie Sanders, U.S. Senate, March 2026

The Canaries: Data That Is Already Real

In November 2025, Stanford University published a study titled "Canaries in the Coal Mine." The research tracked employment among young workers in sectors where AI automation is furthest along: computer programming, customer service, data entry, basic legal work. The finding: a 16% relative decline in employment for this cohort in AI-exposed fields.

This is the most empirically grounded section of Sanders' speech, and it matters precisely because it refutes the most common deflection: that AI job displacement is theoretical, future-tense, and therefore not yet a policy emergency. The Stanford data says otherwise. Displacement is already registering in employment figures for the cohort entering the workforce in AI-sensitive roles.

The pattern is visible across sectors. In the game industry, 28% of developers have already been laid off, and the GDC 2026 survey found that 52% of remaining developers now consider generative AI harmful to their profession. Amazon's 16,000 corporate layoffs, explicitly attributed to AI by its own CEO, hit HR operations, internal communications, and mid-level coordination roles, the middle-management layer that millions of college-educated workers spent years qualifying for. These are not projections. They are reported figures.

BY THE NUMBERS

- AI task completion horizon: doubling every 7 months (METR, 2025).

- Employment of young workers in AI-exposed fields: 16% relative decline (Stanford, November 2025).

- US jobs identified as at risk: ~100 million over the next decade (Senate HELP Committee, 2026).

- Congressional frameworks addressing this: none with enforcement mechanisms.

Who Is Running This, and Why the Villain Frame Falls Short

Sanders named them directly: Elon Musk, Jeff Bezos, Larry Ellison, Mark Zuckerberg, Peter Thiel. He framed them as the primary architects of a revolution they are prosecuting for profit and power, without democratic accountability and without any binding obligation to the workers their systems are replacing.

The concentration-of-power argument is real and important. A handful of companies (OpenAI, Google DeepMind, Meta, Amazon) control the frontier of AI development. The systems they build and deploy shape labor markets, information environments, and decision-making infrastructure across the entire economy. The absence of a mandatory framework governing how they deploy AI against the workforce is a genuine policy failure, and Sanders is right to name it.

Where the framing is too simple is in the motivation it ascribes. The billionaires accelerating this transition are not a unified cabal optimizing for mass unemployment. The dynamics are more mixed: competitive pressure between companies, national competitiveness concerns, genuine conviction about technological progress, and yes, financial incentive. Reducing it entirely to greed misses the structural point. The problem is not that these individuals are unusually malicious. The problem is that the incentive structures they operate within reward acceleration and do not penalize the externalities, and no regulatory architecture currently exists to change that calculus.

OpenAI's charter defines artificial general intelligence as "highly autonomous systems that outperform humans at most economically valuable work." Read as a goal, that is a precise description of the employment risk Sanders is raising, in the company's own published words. Musk's own timeline of AI surpassing human capability by 2030 is consistent with the METR acceleration data. The alarm is warranted. The villain narrative is not the most useful lens for designing a response.

Meta is currently constructing a data center in Louisiana roughly the size of Manhattan, consuming the electricity equivalent of 1.2 million homes when operational. The scale of physical investment in AI infrastructure is inversely proportional to the scale of political investment in managing its consequences. That asymmetry is the real story.

Where Sanders Overstates: The Rhetoric of AI Intention

The weakest part of the speech is the passage in which Sanders describes AI systems as capable of lying, deceiving, and blackmailing. The instinct to convey the seriousness of AI risks is right. But the framing introduces a category error that undercuts the credibility of everything else.

Current AI systems can generate plausible-sounding falsehoods, a problem the field calls hallucination. They can be used as tools in disinformation campaigns. They can produce outputs that are manipulative, harmful, or deceptive in effect. All of this is real and documented. What they do not have is autonomous intent: the capacity to decide to deceive, to choose a victim, to pursue an agenda. Those are properties of minds, and current systems are not minds.

The distinction matters because it changes what kind of regulatory problem you have. An AI system that can be weaponized for deception by a human actor requires accountability frameworks for the humans and institutions deploying it. An AI system that spontaneously decides to blackmail people requires something closer to criminal law applied to non-human agents. These are different problems with different solutions. Conflating them produces rhetoric that alarms without directing action.

The Competitiveness Frame, and Why Sanders Is Right to Reject It

One of the most clarifying moments in the speech is its rejection of the China argument. The standard response to any call for AI constraint runs as follows: if the United States slows down, China will pull ahead, and American technological supremacy will be ceded to an authoritarian rival. Therefore, any constraint on AI deployment is a strategic gift to Beijing.

Sanders refused the premise: we are not in a race to see who eliminates the most jobs first. That is not isolationism; it is a definitional challenge to what winning means. If the prize is the fastest route to structural unemployment for a hundred million Americans, framed as a victory over China, the competitiveness argument has already evacuated its own meaning. Winning a race to automate your own workforce is a policy failure rendered in the language of national security.

The more useful version of the competitiveness question is this: can the United States regulate AI deployment without ceding AI development? The evidence from the EU AI Act suggests it can: imperfectly and with significant friction, but the Act exists and frontier labs are still operating in Europe. Regulation does not equal prohibition. The frame that treats any constraint as surrender is primarily useful to those who benefit from the absence of constraints.

The Costs That Do Not Appear in the Data, and the Real Question

The economic projections around AI job displacement measure what is measurable: job counts, sector exposure, wage levels. They are less equipped to quantify what disappears when tens of millions of people lose not just income but structure, purpose, and social function simultaneously.

One figure from the speech points in this direction. A Common Sense Media poll found that 72% of American teenagers now use AI for companionship, not for homework or research, but for company. This is the social baseline of a generation that has grown up watching institutions visibly fail to deliver what they promised. The adults who may lose their economic footing over the next decade are the parents of those teenagers. These costs will not appear in the Senate HELP Committee report. They will appear in public health data, in crime statistics, in the erosion of civic institutions, and in the political conditions that follow mass economic dislocation at speed.

Sanders is right that Congress is "way, way behind." AI capability is advancing on a timeline measured in months. Legislative processes operate in years, subject to lobbying, electoral cycles, and the basic difficulty of regulating something most legislators cannot technically explain. Every month without a meaningful framework is a month in which deployment advances further into sectors that will be progressively harder to unwind. The EU AI Act, the most comprehensive regulatory framework in existence, is already being tested by frontier systems that outpace the categories it was designed to govern. Regulation at a fraction of the speed of the technology it is supposed to govern is documentation, not governance.

OUR POSITION

Sanders asked the right questions in the wrong order. The productive frame is not "how many jobs will AI destroy?" It is: who controls the systems, who captures the gains, who absorbs the costs, and what governance structures, if any, require those building AI to answer for its consequences. The 100 million number is a forcing function for those questions. Treat it as such.

The technology will continue to advance regardless of what any senator says on any floor. The question is not whether to stop it. The question is whether the societies absorbing it will have any say in how the transition is managed: who bears the cost, who captures the benefit, and what obligations those building the systems carry toward the people whose working lives they are restructuring.

Those are political questions. They require political answers. Choosing not to produce them is itself a choice, one with precise and predictable consequences. The window for those answers is narrower than most people currently believe.