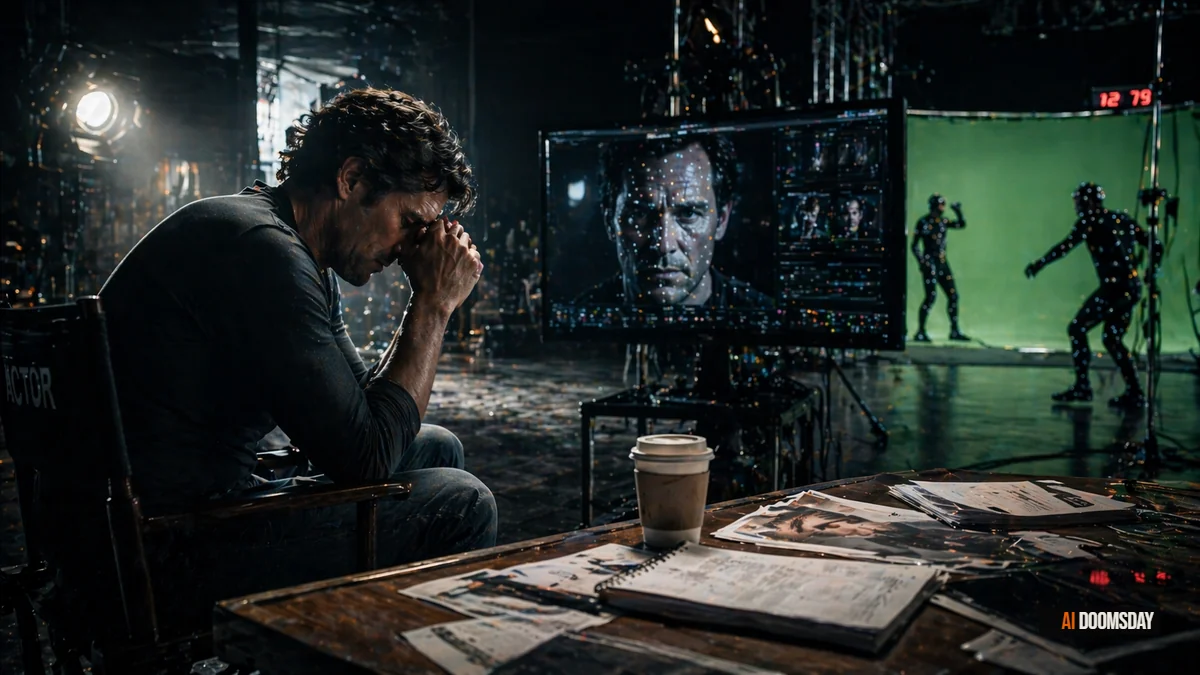

Acting is among the most human of professions. It requires presence, vulnerability, the ability to inhabit another consciousness, and a live relationship with an audience or a camera that no algorithm has been able to simulate at scale. The displacement risk is Low, and the 2050 horizon reflects that. No current technology can replace the screen performance of a trained, experienced actor.

But screen performance is only one part of what actors do for a living. The profession is a pyramid. At the top, a relatively small group of recognizable faces headlines the productions that attract audiences and studios. Beneath them sits a vast middle tier of working actors who fill supporting roles, provide dubbing and voice work, appear as day players, and populate scenes as extras and background performers. It is in these middle and lower tiers that AI is already operating, and where the economic restructuring is most visible and most consequential.

The SAG-AFTRA strike of 2023 made this visible to a wider public. Among its central demands were protections against the use of digital replicas, AI-generated likenesses, and synthetic voice cloning without consent and compensation. The studios agreed to AI provisions in the contract that ended the strike, but the technology has continued to advance regardless. What was negotiated in 2023 is already being tested by capabilities that did not exist when the ink dried.

Key Points

- AI synthetic voice tools from companies including ElevenLabs and Replica Studios can now clone an actor's voice from short samples, enabling dubbing, narration, and audio replacement without the original performer.

- AI-generated digital doubles and deepfake face replacement are being used in production to extend performances, recreate deceased actors, and reduce the cost of reshoots, raising unresolved questions about likeness rights and fair compensation.

- Background performers and extras face the most immediate displacement risk: generative AI can now synthesize convincing crowd scenes, reducing or eliminating the need to hire background actors for many production contexts.

- The SAG-AFTRA 2023 agreement established consent and compensation requirements for AI use of actor likenesses, but enforcement is technically difficult and the provisions are already being strained by new capabilities.

- The top tier of the profession, named actors with established audiences and negotiating power, is largely insulated for now; the erosion is concentrated in the working middle and the entry-level base of the pyramid.

What an Actor Actually Does

The public understanding of acting is shaped by its most visible practitioners: film stars, television leads, stage performers whose names appear above the title. That work, embodied performance delivered live or captured on camera, is what most people picture when they consider the profession.

The economic reality is different. Most working actors earn the majority of their income from work that is less visible: voice acting for animation and video games, dubbing for foreign-language releases, audiobook narration, commercial work, corporate training videos, and background performance in film and television. These are the segments where volume matters more than name recognition, where rates are negotiated by category rather than by star power, and where the substitution calculus for AI is most favorable.

Acting also involves a significant amount of craft development work: training, auditions, rehearsal, dialect and accent coaching, physical preparation for roles. AI is beginning to enter this layer too, as a practice tool and a coaching supplement, which changes the economics of skill development without eliminating the need for it.

Where AI Has Already Landed

The most operationally mature AI application in the acting economy is synthetic voice. Tools like ElevenLabs, Replica Studios, and Resemble AI can now generate convincing voice performances from short audio samples. For dubbing, this is transformative. A foreign-language release that previously required hiring local voice actors for every market can now be processed automatically, with the original actor's voice adapted to the target language through AI synthesis.

European broadcasters and streaming platforms began piloting AI dubbing at scale in 2024 and 2025. The quality is uneven but improving rapidly. The cost differential is not marginal: hiring voice actors to dub a 10-episode series into six languages is a significant budget line, and AI dubbing, where it reaches comparable quality, cuts that cost by close to an order of magnitude. The financial incentive is not subtle.

THE SUBSTITUTION

A 10-episode series dubbed into six languages by human voice actors costs tens of thousands of euros in talent fees alone. AI dubbing at comparable quality reduces that cost by 80 to 90 percent. The economic incentive for studios does not require a mandate. It requires a budget meeting.

At the visual layer, digital double technology has advanced to the point where extended screen time can be generated from a limited performance capture session. The most publicized cases involve recreating deceased actors, but the same technology applies to reducing reshoots, extending a contracted performance beyond the agreed shooting schedule, or substituting a performer for a stunt or physically demanding sequence. Each of these use cases displaces work that would previously have required hiring additional talent.

Background performers face the most direct and immediate risk. Generative AI tools can now synthesize convincing crowd scenes, populated streets, and background action without any human performers on set. For productions operating under tight budgets, the option to generate the background digitally rather than hire 50 extras for a day is already available and increasingly used.

SAG-AFTRA, Likeness Rights, and the Consent Problem

The 2023 SAG-AFTRA strike produced a landmark agreement that addressed AI use of performer likenesses directly. The provisions require consent before an actor's digital replica or voice can be created, prohibit studios from using AI to generate performances that replace covered work without compensation, and establish that an actor's likeness cannot be reused in perpetuity without renewed negotiation.

These protections are meaningful, but they operate in a landscape that makes enforcement technically difficult. Voice cloning requires only a short audio sample, which any public appearance can provide. Face replacement technology can be applied in post-production in ways that are not always disclosed in production documentation. The provisions protect union members on covered productions; they do not govern independent productions, non-union work, or the international contexts where AI dubbing is expanding most rapidly.

The deeper problem is jurisdictional and definitional. What constitutes a digital replica sufficient to trigger consent requirements? At what point does AI-assisted performance become AI-generated performance? These questions were partially addressed in 2023 and are being actively relitigated as the technology outpaces the language of the agreement.

The Middle Tier and the Pyramid Effect

The profession of acting has always had a pyramidal structure. A small number of performers earn very large incomes; a much larger number earn modest ones; and a still larger number earn most of their income from work adjacent to performance while pursuing acting opportunities when they arise.

AI does not threaten the apex of this pyramid in any near-term scenario. Named actors with proven audience draw are not substitutable by synthetic performers in any project where audience recognition is part of the commercial proposition. The economic value of a recognizable face in a leading role is not reducible to the visual representation of that face; it is a function of cultural familiarity, press coverage, and audience trust that AI cannot synthesize from scratch.

The middle tier is a different matter. Supporting actors, recurring television roles, ensemble casts where individual performances are important but no single performer is the commercial draw: these are contexts where AI substitution is technically plausible, economically attractive to cost-conscious producers, and difficult for audiences to detect reliably. The voice acting and dubbing markets, which represent substantial income for working actors outside the top tier, are already being restructured by synthetic voice technology.

Entry-level work is the most immediately exposed segment of the market: the background performances, day-player roles, and commercial spots that actors take to stay active and generate income while building toward larger roles. This work was never high-margin for the performers doing it. It was a way to stay working, build credits, and maintain a presence in the industry ecosystem. When AI can replace it at a fraction of the cost, the entry points to the profession narrow, and the narrowing will be felt most acutely by actors who are still building careers rather than by those who have already built them.

How to Use AI as a Working Actor

The actors who understand what AI can and cannot do are better positioned to use it purposefully. The tools that threaten parts of the market can also be useful to working performers who approach them correctly.

ElevenLabs and similar voice platforms can be used, with appropriate licensing and consent structures, to prototype voice work, develop character voices for auditions, and explore dialect variations without the cost of repeated studio sessions. Runway and Pika Labs allow actors and directors to previsualize scenes before expensive shoots, which can actually create more opportunities for actor input in pre-production. AI accent and dialect coaching tools can supplement work with a human coach, particularly for self-directed preparation.

The more important adaptation is understanding which work is defensible and which is not. Live performance, physical presence, the relationship between a performer and a live audience or a camera operator in a real space: these are not things that AI is close to replacing. The actors who invest in what AI cannot do, in physical craft, in live performance skills, in the ability to generate genuine spontaneity in a collaborative production environment, are building toward something durable. Those who rely primarily on categories of work that AI is already pricing out should be making different choices now.

What I Think

The 2050 horizon and Low risk classification are probably right about the apex of the profession and probably wrong about the middle. The compression of the working actor market is not a future risk. It is a present structural change that the industry is managing through a combination of negotiated protections, technological optimism, and selective attention to where the disruption is actually landing.

What concerns me is not the extinction of acting as a craft. Audiences will continue to want human performers for as long as human performers can do things that synthetic performers cannot. That is a long horizon. What concerns me is the narrowing of the pathways into the profession. The extras and background performers who are being replaced by generative AI were not just filling screen time. They were participating in the industry, developing relationships, learning how productions work, and building the kind of proximity to the work that creates opportunities.

When those entry points close, the profession does not disappear. It contracts toward a smaller group of people who were already established when the contraction began, and it becomes harder to enter for everyone who was not. That is the pattern wherever AI reduces volume work at the base of a profession's pyramid. It is worth naming clearly, even when the headline risk rating remains Low.

"AI cannot yet perform. It can, however, replace every form of acting work that does not require an audience to care who is doing it, and that is a large portion of the market."