The memory inside your next laptop, your next phone, your next game console will not reach you. A growing share of it has already been claimed. The hyperscalers, the cloud giants, the infrastructure arms of companies whose products sit in your pocket and on your desk, are consuming semiconductor capacity at a rate the industry has no historical equivalent for. What remains after they are done is what the consumer market divides among itself.

Key Points

- Data centers are projected to consume roughly 70% of all high-end memory chips manufactured globally in 2026, driven by AI infrastructure demand.

- High Bandwidth Memory chips for AI GPUs require approximately four times the wafer surface area per gigabyte compared to standard consumer memory.

- PC makers have signaled price increases of 15 to 20%. Smartphone memory costs are following the same trajectory.

- The shortage is projected to persist through at least 2027, with no significant new fabrication capacity coming online before then.

- Consumers who do not use AI are paying higher prices for electronics because AI companies are absorbing the available supply.

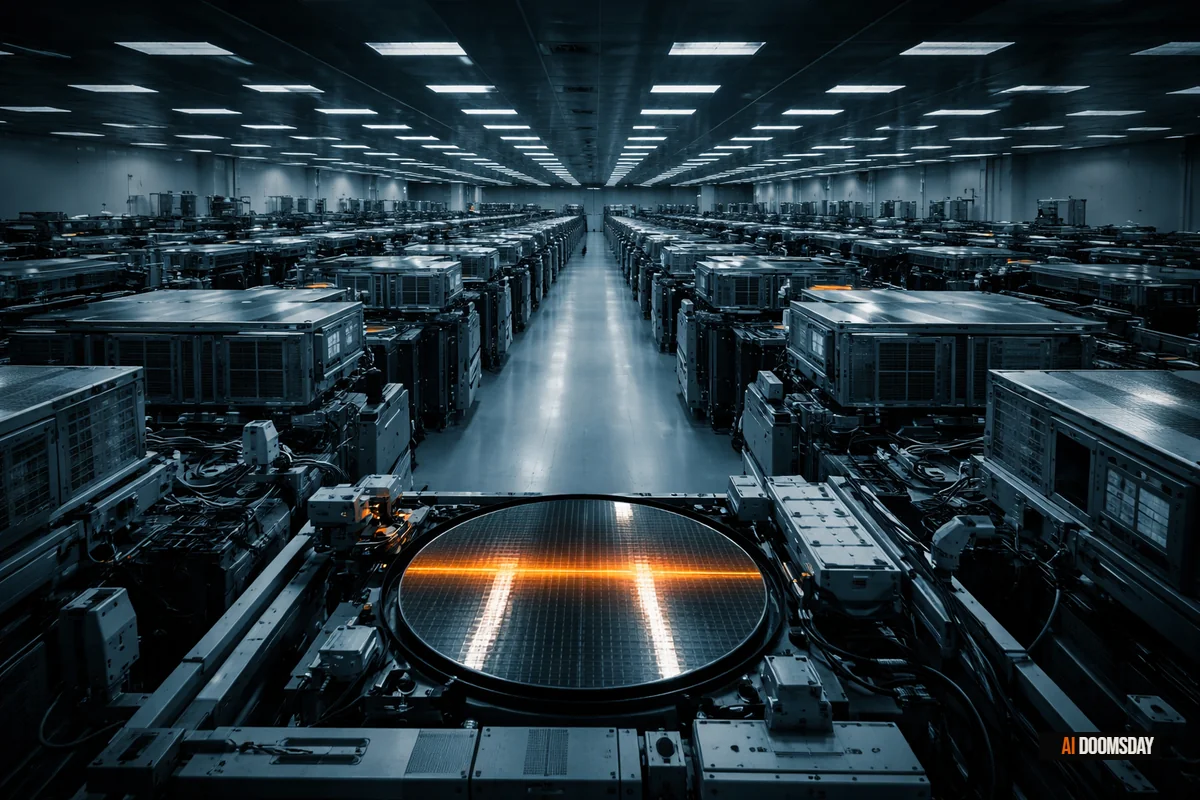

In 2026, data centers will account for roughly 70% of all high-end memory chips manufactured globally. That number is the result of a confluence of factors, but the central one is this: the AI buildout requires memory architectures that are enormously expensive to produce. High Bandwidth Memory, the chip variant that powers the GPU clusters inside AI data centers, requires approximately four times the wafer surface area per gigabyte as the DDR5 chips that go into ordinary computers. The physical capacity of semiconductor fabrication plants is finite. When data centers claim roughly 70% of the high-end output that capacity can produce, the effects are not abstract.

The Wafer Math

Understanding the 70% figure requires a brief excursion into semiconductor production. Memory chips are fabricated on silicon wafers. A wafer has a fixed amount of usable surface area. The question is which chips you cut from it.

HBM, the memory variant that AI infrastructure demands, requires roughly 4x the wafer area per gigabyte compared to DDR5. GDDR7, used in gaming hardware, requires approximately 1.7x. When Samsung, SK Hynix, and Micron, the three companies that collectively dominate global DRAM production, redirect their cleanrooms toward HBM and enterprise-grade components, the output available for consumer applications does not decline in proportion to the gigabytes of HBM shipped. It contracts far more, because each wafer that goes to HBM production yields far fewer gigabytes of memory than the same wafer allocated to consumer DRAM: at a 4x area ratio, every gigabyte of HBM displaces roughly four gigabytes of DDR5.
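
The wafer trade-off can be made concrete with a rough sketch. The 4x and 1.7x area ratios are the ones quoted above; the 30% reallocation scenario and the function name `gigabytes_from_wafers` are illustrative assumptions, not industry figures.

```python
# Relative wafer area needed per gigabyte, normalized to DDR5 = 1.0.
# The 4x and 1.7x ratios come from the article; everything else is illustrative.
AREA_PER_GB = {"DDR5": 1.0, "GDDR7": 1.7, "HBM": 4.0}


def gigabytes_from_wafers(wafer_share: dict[str, float]) -> dict[str, float]:
    """Convert shares of wafer capacity into relative gigabyte output.

    Output is in DDR5-equivalent units: 1.0 means the gigabytes produced
    if the entire wafer pool were spent on DDR5.
    """
    total = sum(wafer_share.values())
    if abs(total - 1.0) > 1e-9:
        raise ValueError("wafer shares must sum to 1.0")
    return {kind: share / AREA_PER_GB[kind] for kind, share in wafer_share.items()}


# Hypothetical scenario: 30% of a DDR5-only wafer pool moves to HBM.
before = gigabytes_from_wafers({"DDR5": 1.0})
after = gigabytes_from_wafers({"DDR5": 0.7, "HBM": 0.3})

total_before = sum(before.values())
total_after = sum(after.values())

print(f"Consumer DDR5 output: {after['DDR5'] / before['DDR5']:.1%} of before")
print(f"HBM output:           {after['HBM']:.3f} DDR5-equivalent units")
print(f"Total gigabytes:      {total_after / total_before:.1%} of before")
```

Under these assumptions, total gigabyte output falls by 22.5% even though no wafer sits idle: the 30% of wafers moved to HBM yield only 7.5% of the original gigabytes.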

Global DRAM supply is projected to grow by approximately 16% in 2026. In any other year, that figure would look healthy. In the context of AI infrastructure demand that is compounding faster than production can scale, it is not enough. AI cloud infrastructure alone will consume roughly three exabytes of the approximately 40 exabytes of total global memory capacity produced this year. The concentration of that demand, and the physical cost of serving it, is where the consumer market absorbs the pressure.
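
One way to read the gap between three exabytes and the pressure it creates is to convert gigabytes into wafer area. The sketch below assumes, purely for illustration, that all AI cloud memory is HBM at the 4x area ratio and that everything else is built at DDR5-equivalent density. Real product mixes are more varied, so treat the result as an order-of-magnitude illustration rather than a measurement.

```python
TOTAL_EB = 40.0          # approximate global memory output (from the article)
AI_EB = 3.0              # approximate AI cloud consumption (from the article)
HBM_AREA_FACTOR = 4.0    # wafer area per gigabyte relative to DDR5 (from the article)


def ai_share_of_wafer_area(ai_eb: float, total_eb: float, area_factor: float) -> float:
    """Share of wafer area used by AI memory.

    Assumption (not from the article): all AI memory is HBM and all
    remaining memory is produced at DDR5-equivalent density.
    """
    ai_area = ai_eb * area_factor          # DDR5-equivalent area units
    other_area = (total_eb - ai_eb) * 1.0  # everything else at 1x
    return ai_area / (ai_area + other_area)


share_of_bits = AI_EB / TOTAL_EB
share_of_area = ai_share_of_wafer_area(AI_EB, TOTAL_EB, HBM_AREA_FACTOR)

print(f"AI share of gigabytes:  {share_of_bits:.1%}")
print(f"AI share of wafer area: {share_of_area:.1%}")
```

Under those assumptions, 7.5% of the gigabytes occupies roughly 24% of the wafer area, which is why a modest-looking share of output can weigh heavily on everything else.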

SILICON MATH

HBM, the memory architecture powering AI data centers, requires 4x the wafer surface area per gigabyte compared to DDR5. GDDR7 requires 1.7x. When manufacturers pivot cleanroom capacity toward AI-grade chips, consumer memory output contracts far faster than the headline reallocation figures suggest.

What the Consumer Market Is Actually Facing

The consequences are already visible across every major consumer electronics category. They are not uniform, but they are consistent in direction.

In the PC market, Lenovo, Dell, HP, Acer, and ASUS have all signaled price increases of 15 to 20% industry-wide. Some vendors are shipping machines without RAM preinstalled, a practice last seen at scale during the chip shortages of 2021 and 2022. Spec configurations that previously defaulted to 16GB of RAM are shipping at 12GB. Configurations that offered 256GB of storage are moving to 128GB to hold price points. IDC projects the PC market will contract by between 4.9% and 8.9% in 2026.

The smartphone market is following a parallel trajectory. Shipments are forecast to decline by 12.9% in 2026, the steepest drop in over a decade, while the average selling price rises 14% to a record $523 per unit. The paradox is structural: as volume falls, the devices that do reach market cost more, because manufacturers are facing higher component costs and passing them on to buyers. Mid-range and budget-tier models are being quietly discontinued or downgraded to remain viable. The same dynamic is propagating through tablets, smart TVs, and gaming hardware.
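
The smartphone volume-versus-price paradox above comes down to short arithmetic. The sketch below uses only the two percentages and the $523 figure quoted above; the implied prior-year price and the revenue index are derived values, not reported ones.

```python
SHIPMENT_CHANGE = -0.129   # forecast change in units shipped (from the article)
ASP_CHANGE = 0.14          # forecast change in average selling price (from the article)
ASP_2026_USD = 523.0       # forecast average selling price (from the article)

# Price implied for the prior year if a 14% rise lands at $523.
implied_prior_asp = ASP_2026_USD / (1 + ASP_CHANGE)

# Revenue scales with units times price, so the two changes multiply.
revenue_index = (1 + SHIPMENT_CHANGE) * (1 + ASP_CHANGE)

print(f"Implied prior-year ASP: ${implied_prior_asp:,.2f}")
print(f"Revenue vs. prior year: {revenue_index - 1:+.1%}")
```

On these figures, the implied prior-year average is about $459, and total revenue lands within 1% of the previous year: far fewer buyers, paying noticeably more each, for roughly the same market value.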

Gaming consoles and dedicated GPU products are caught in a double bind: they depend on GDDR7, which faces its own wafer-area penalty, while competing for fabrication priority with the HBM production lines that semiconductor plants are prioritizing above all else. The gaming market, already under pressure from the economic cycle, is looking at another hardware generation defined by constrained availability and elevated launch prices.

"The memory market is at an unprecedented inflexion point, with demand materially outpacing supply."

Francisco Jeronimo, VP Data & Analytics, IDC

Cyclical or Structural: The Question That Matters

Every major component shortage in the past decade has eventually resolved. The 2021 chip crunch eased. DDR4 prices normalized. Consumers waited, paid a premium for a cycle, and moved on. The historical pattern has been cyclical: demand spikes, supply catches up, equilibrium returns.

IDC is not characterizing the current situation as cyclical. Its analysts have described the present reallocation as "not just a cyclical shortage, but a potentially permanent, strategic reallocation of the world's silicon wafer capacity." That framing has specific implications. If the reallocation is permanent, the consumer market is not waiting for a correction. It is adapting to a new baseline, one in which a growing share of the world's memory production is structurally committed to AI infrastructure and no longer available for commodity consumer applications.

The shortage is expected to persist through at least 2027. New HBM-capable fabrication capacity requires years of lead time and capital expenditure in the billions. The hyperscalers have already signed the offtake agreements. The manufacturers have already committed the capacity. The consumer market is working with what is left after those commitments are honored.

SCALE OF THE BET

Microsoft, Google, Meta, and Amazon are projected to spend approximately $600 billion in combined capital expenditure in 2026. A significant share of that flows directly into memory chip procurement and data center infrastructure, locking in semiconductor commitments years in advance.

The Disappearing Entry-Level Market

The downstream consequence that has received the least attention is also the one with the broadest reach. IDC projects that the entry-level PC market will effectively disappear by 2028. The language is precise and its implications are significant: a product category that serves first-time buyers, students, lower-income households, and markets in the developing world is being priced out of existence by the cost pressures generated at the top of the production stack. The mechanism is structurally identical to what Senator Sanders documented in his Senate speech on AI and 100 million jobs: gains from AI infrastructure concentrate at the top, costs distribute across the widest possible base, and the people with the least leverage absorb the most.

Affordable consumer electronics are not a luxury. They are infrastructure. The global digital divide has narrowed over the past two decades largely because the cost of computing hardware declined reliably and consistently. A mid-range Android phone from 2024 at $200 represented extraordinary capability relative to its price. The dynamics now in motion work in the opposite direction: prices rise, specs fall to maintain margins, and the floor of the accessible market rises with them. The people who can least absorb price increases are the ones most exposed to entry-level contraction.

"DDR5 RAM should be treated as a retirement investment at this point."

Windows Central, March 2026

The Same Logic, Applied Again

There is a companion story to this one: Meta's Hyperion data center in Louisiana. That story is about the energy cost of AI infrastructure: how the power demands of a single facility can consume 20% of a state's electricity grid, financed in part through rate increases borne by communities that had no seat at the table when the contract was signed. The silicon story is the same logic applied one level up the supply chain.

The companies spending $600 billion on AI infrastructure in 2026 are the same companies whose products sit on every consumer's desk, in every pocket, and on every wall. The capital they are committing to data center build-out is, in part, capital that will not flow into consumer hardware cost reduction. Amazon alone has committed $200 billion to AI infrastructure while simultaneously cutting 16,000 corporate jobs, and its own consumer devices depend on the memory market it is helping to strain. The semiconductor capacity they are consuming is capacity that will not produce the chips that go into affordable phones, cheap laptops, and accessible smart home devices. The mechanism is not malicious. The outcome is not accidental. It is the predictable result of concentrated demand meeting finite physical capacity, with the costs of that concentration distributed across the widest possible base.

The energy cost of AI lands on ratepayers in Louisiana. The hardware cost of AI lands on every consumer in every market who buys a device. Both are externalities. Both were decided without a public vote. The question worth asking, as the buildout continues and the capacity commitments extend further into the decade, is whether any regulatory framework exists, or is being designed, to account for the cumulative cost of choices being made at the infrastructure layer of the global economy. The answer, at the moment, is no.